As artificial intelligence continues to evolve, GPUs (Graphics Processing Units) have become the backbone of high-performance computing. Two of the most sought-after data-center GPUs today are the NVIDIA H100 and the NVIDIA H200. For businesses and developers, understanding how these GPUs are priced, and what drives those costs, is essential for making informed infrastructure decisions.

Why GPU Pricing Matters

GPU costs can significantly impact the overall budget of AI projects, especially for training large models or running high-volume inference workloads. Whether you are a startup or an enterprise, choosing the right GPU can mean the difference between cost efficiency and overspending.

Overview of H100 and H200 GPUs

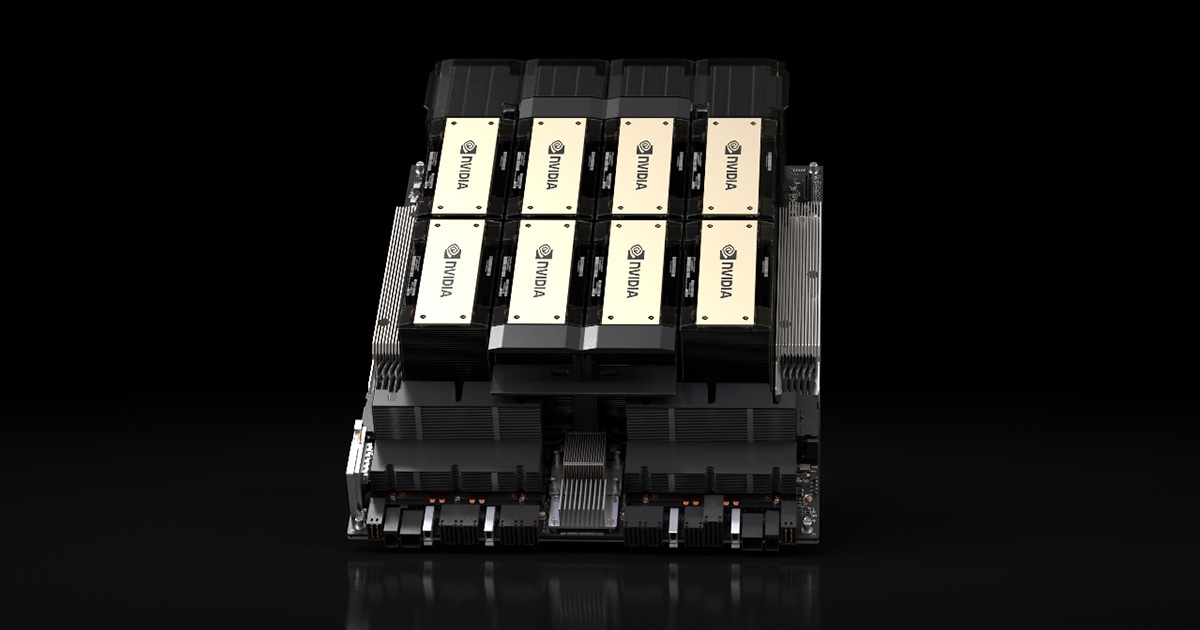

The H100, based on NVIDIA’s Hopper architecture, is widely used for AI training and inference. It offers strong performance, high memory bandwidth, and advanced capabilities for large-scale machine learning tasks.

The newer H200 builds on the same Hopper foundation, adding higher memory capacity and higher memory bandwidth (4.8 TB/s, compared with 3.35 TB/s on the H100 SXM). This makes it particularly suitable for memory-intensive applications such as large language models and complex simulations.

Key Factors That Influence GPU Pricing

1. Hardware Performance

One of the biggest drivers of cost is performance. The H200 offers the same peak compute as the H100, so its higher price mainly reflects its larger, faster memory. For businesses running memory-bound workloads, the added throughput can justify the extra expense.

2. Memory Capacity

Memory plays a crucial role in AI workloads. The H200 offers 141 GB of memory, compared with 80 GB on the H100 SXM, allowing it to hold larger models and batches without splitting work across multiple GPUs. This reduces complexity but increases upfront cost.
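As a rough illustration of the memory point above, the sketch below estimates how many GPUs are needed to hold a model's weights plus some working headroom. The 80 GB and 141 GB capacities come from NVIDIA's published specifications for the H100 SXM and H200. The function name and the 20% overhead factor are assumptions made for illustration, not measured values.

```python
import math

H100_MEMORY_GB = 80   # H100 SXM, per NVIDIA's published specifications
H200_MEMORY_GB = 141  # H200, per NVIDIA's published specifications


def gpus_needed(params_billions, gpu_memory_gb, bytes_per_param=2, overhead=1.2):
    """Estimate the GPUs needed to hold a model's weights plus headroom.

    overhead is an ASSUMED multiplier for activations and cache; real
    requirements depend on batch size, sequence length, and framework.
    """
    # Billions of parameters times bytes per parameter gives gigabytes.
    weights_gb = params_billions * bytes_per_param
    return math.ceil(weights_gb * overhead / gpu_memory_gb)


# A 70B-parameter model stored in 16-bit precision (2 bytes per parameter)
print("H100s needed:", gpus_needed(70, H100_MEMORY_GB))
print("H200s needed:", gpus_needed(70, H200_MEMORY_GB))
```

Under these assumptions, the 70B-parameter model needs three H100s but only two H200s. Whether that saving outweighs the H200's higher price depends on the rates you are actually quoted.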

3. Availability and Demand

GPU pricing is heavily influenced by market demand. As AI adoption grows, high-end GPUs like the H100 and H200 are often in short supply. This can lead to fluctuating prices, especially in cloud environments where resources are shared.

4. Cloud vs On-Premise Pricing

Most businesses access these GPUs through cloud providers rather than purchasing hardware outright.

- Cloud pricing: typically charged per hour, with costs varying based on region and demand

- On-premise pricing: requires significant upfront investment but may be more cost-effective for long-term, consistent usage

The H200, being newer and more advanced, generally comes at a premium in both scenarios.
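The cloud versus on-premise trade-off can be framed as a break-even calculation: how many hours of use it takes before buying becomes cheaper than renting. The sketch below is a minimal version of that arithmetic. Every figure in it is a hypothetical placeholder, not a real quote, and the function name is invented for this example.

```python
def break_even_hours(purchase_cost, cloud_rate_per_hour, on_prem_cost_per_hour=0.0):
    """Hours of use at which buying hardware becomes cheaper than renting.

    on_prem_cost_per_hour covers running costs such as power, cooling,
    and operations. Returns None if owning never saves money per hour.
    """
    hourly_saving = cloud_rate_per_hour - on_prem_cost_per_hour
    if hourly_saving <= 0:
        return None
    return purchase_cost / hourly_saving


# HYPOTHETICAL figures for illustration only: substitute your own quotes.
hours = break_even_hours(
    purchase_cost=30_000,
    cloud_rate_per_hour=3.0,
    on_prem_cost_per_hour=0.5,
)
years = hours / (24 * 365)
print(f"Break-even after {hours:,.0f} hours (~{years:.1f} years of continuous use)")
```

The lower your expected utilisation, the longer the payback period, which is why on-premise hardware tends to suit steady, long-running workloads.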

Cost Comparison: H100 vs H200

While exact pricing varies, some general trends can help guide decisions:

- H100: more widely available and slightly more affordable, making it a popular choice for many AI applications

- H200: higher cost but better suited for memory-heavy workloads and next-generation AI models

For many organisations, the choice comes down to whether the performance gains of the H200 justify its higher price.
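One way to make that judgement concrete is to compare cost per job rather than cost per hour: a more expensive GPU is still cheaper overall if it finishes the work proportionally faster. The sketch below shows the arithmetic. The hourly rates and speedup factors are hypothetical placeholders, and the function names are invented for this example.

```python
def job_cost(baseline_hours, hourly_rate, speedup=1.0):
    """Cost of one job, given its baseline duration, the hourly rate, and a speedup factor."""
    return baseline_hours / speedup * hourly_rate


def h200_is_cheaper(h100_rate, h200_rate, h200_speedup):
    """The H200 costs less per job only if its speedup exceeds its price premium."""
    return h200_speedup > h200_rate / h100_rate


# HYPOTHETICAL rates and speedups for illustration only.
H100_RATE = 2.0  # per GPU-hour
H200_RATE = 3.0  # per GPU-hour, a 1.5x premium

for speedup in (1.4, 1.6):
    h100_total = job_cost(100, H100_RATE)
    h200_total = job_cost(100, H200_RATE, speedup)
    verdict = "cheaper" if h200_is_cheaper(H100_RATE, H200_RATE, speedup) else "more expensive"
    print(f"speedup {speedup}: H100 {h100_total:.2f}, H200 {h200_total:.2f} (H200 is {verdict})")
```

The speedup to plug in should come from benchmarking your own workload, since gains from extra memory and bandwidth vary widely between tasks.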

Why Choose H100

Choose the H100 if:

- You need reliable performance at a relatively lower cost

- Your workloads are not extremely memory-intensive

- You want a proven, widely supported GPU

It is often the go-to choice for startups and mid-sized AI projects.

Conclusion

For businesses navigating the fast-growing AI landscape, making the right choice between these GPUs can optimise both performance and budget. By carefully evaluating your requirements, you can invest in the solution that delivers the best long-term value.